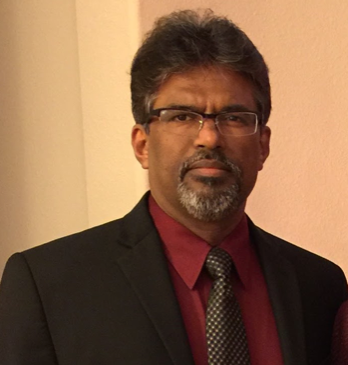

Speaker: Lakshman Tamil (University of Texas at Dallas – USA)

Date: Thursday, April 23, 2026

Time: 09:00-10:00 (Central Time)

Duration: 1 hour

Room: Room 129

Abstract:

Artificial intelligence is poised to fundamentally reshape healthcare by expanding access to diagnosis, enhancing clinical accuracy, and enabling truly personalized care. Yet this promise is tempered by a persistent challenge: healthcare data are often scarce, imbalanced, and biased, reflecting systemic disparities in infrastructure, access, and representation. Addressing these limitations is critical to developing AI systems that are not only technically robust but also trustworthy, equitable, and clinically meaningful. This keynote will trace the evolution of AI in healthcare, from early rule-based and statistical methods to today’s advanced machine learning and deep learning paradigms. Drawing on our work in breast, lung, and oral cancer detection, we will illustrate how AI can address pressing gaps in early diagnosis, shifting the focus from benchmark-driven performance to real-world clinical impact.

Equally important is how these systems are delivered. We will discuss deployment models that align with clinical workflows, including cloud-based, on-premises, embedded, and web-enabled platforms. These approaches are supported by advances in computational infrastructure, ranging from CPUs, GPUs, and FPGAs to emerging hybrid classical–quantum and quantum computing paradigms, which enable scalable and efficient healthcare solutions. Finally, we will explore the broader ecosystem required for global adoption, including model security, blockchain-enabled traceability, and compliance with regulatory requirements such as FDA approval and CE marking. At its core, this talk emphasizes that equity must be a guiding principle in healthcare AI, ensuring that innovation extends beyond well-resourced environments to benefit underserved populations worldwide.

Biography:

Dr. Lakshman Tamil is a distinguished academic, entrepreneur, and inventor specializing in innovative healthcare technologies. He is a professor and Director of the Quality of Life Technology Laboratory at the University of Texas at Dallas, where he leads interdisciplinary research in IoT, quantum and classical machine learning, and their applications in healthcare. He also co-founded MedCognetics, Inc., a UT Dallas spin-off focused on commercializing medical AI innovations. Dr. Tamil holds 25 U.S. patents and has authored over 160 research papers and 8 book chapters. He has guided 25 Ph.D. students and is a Fellow of the National Academy of Inventors, Optica, and the Electromagnetics Academy. He received UT Dallas’ Best Teaching Award in 2022. Previously, he founded Yotta Networks and held leadership roles at Alcatel (now Nokia), where he helped pioneer the world’s first all-optical IP router. His mission is to advance intelligent systems that personalize healthcare, enhance chronic disease management, and reduce costs.

Speaker: Xiaowen Gong (Auburn University – USA)

Date: Friday, April 24, 2026

Time: 08:45-09:45 (Central Time)

Duration: 1 hour

Room: Room 129

Abstract:

Federated learning (FL), an emerging topic that has received tremendous research attention in the past few years, has numerous promising applications in networked intelligent systems such as connected and autonomous vehicles and collaborative robots. Existing work on FL often imposes constraints: for example, that clients (agents) interact with homogeneous environments, or that they participate in FL with a particular pattern (such as balanced participation), synchronously, or with the same number of local iterations. These constraints can be hard to meet in practice.

In this talk, we will present our recent research on flexible FL, also called anarchic FL (AFL), which gives clients considerable freedom: they can participate in FL flexibly and efficiently despite heterogeneous environments and/or heterogeneous, time-varying computation and communication capabilities. In particular, we will discuss three studies in this research direction: (1) federated reinforcement learning (FRL), where clients interact with heterogeneous environments; (2) bilevel-optimization-based FL, where clients exhibit anarchic participation behaviors; and (3) decentralized FL, where clients exhibit anarchic participation behaviors. We will describe the challenges in the algorithm design and convergence analysis of the AFL algorithms in these studies, along with the techniques that address them. We will also discuss future research directions.

Biography:

Xiaowen Gong is currently a Godbold Associate Professor in the Department of Electrical and Computer Engineering (ECE) at Auburn University, Alabama, USA. He received his PhD degree in Electrical Engineering from Arizona State University (ASU) in 2015. From 2015 to 2016, he was a postdoctoral researcher in the Department of ECE at The Ohio State University. His research interests are in wireless networks and their applications, with a current focus on machine learning and AI in wireless networks. He received the IEEE Internet of Things Journal Best Paper Runner-up Award in 2022 (as a co-author), the IEEE INFOCOM 2014 Runner-up Best Paper Award (as a co-author), the ASU ECEE Palais Outstanding Doctoral Student Award in 2015, and the NSF CAREER Award in 2022. He has served as an Associate Editor for IEEE/ACM Transactions on Networking and IEEE Transactions on Wireless Communications, a Guest Editor for IEEE Transactions on Network Science and Engineering, and a Lead Guest Editor for IEEE Open Journal of the Communications Society.

Speaker: Chris Crawford (University of Alabama – USA)

Date: Friday, April 24, 2026

Time: 13:30-14:30 (Central Time)

Duration: 1 hour

Room: Room 129

Abstract:

Brain-Computer Interface (BCI) systems convert Central Nervous System (CNS) activity into artificial output that is then used to replace, restore, enhance, supplement, or improve natural CNS output. A BCI works by acquiring brain signals, identifying patterns in them, and producing actions based on the observed patterns. This process allows users to interact with their environment without using their peripheral nerves and muscles. Outputs produced by a BCI system can drive applications ranging from wheelchairs to video games. In this talk, Dr. Crawford will discuss his physiological computing education research, which examines the use of BCIs to engage students in STEM. The presentation will also cover his work on integrating block-based programming (similar to Scratch) with sensors that measure muscle and brain activity.

Biography:

Dr. Chris Crawford is an Associate Professor in the Department of Computer Science at the University of Alabama, Tuscaloosa, Alabama. He directs the Human-Technology Interaction Lab (HTIL). His research focuses on human-robot interaction and Brain-Computer Interfaces (BCIs). He has investigated systems that provide computer applications and robots with information about a user’s cognitive state. He previously developed a brain-drone racing system that was featured by over 800 news outlets, including Discovery, USA Today, the New York Times, and Forbes. In addition to investigating brain-robot interaction applications, Dr. Crawford developed Neuroblock, a tool designed to engage K-12 students in developing neurofeedback applications. He recently received an NSF CAREER Award for his research.